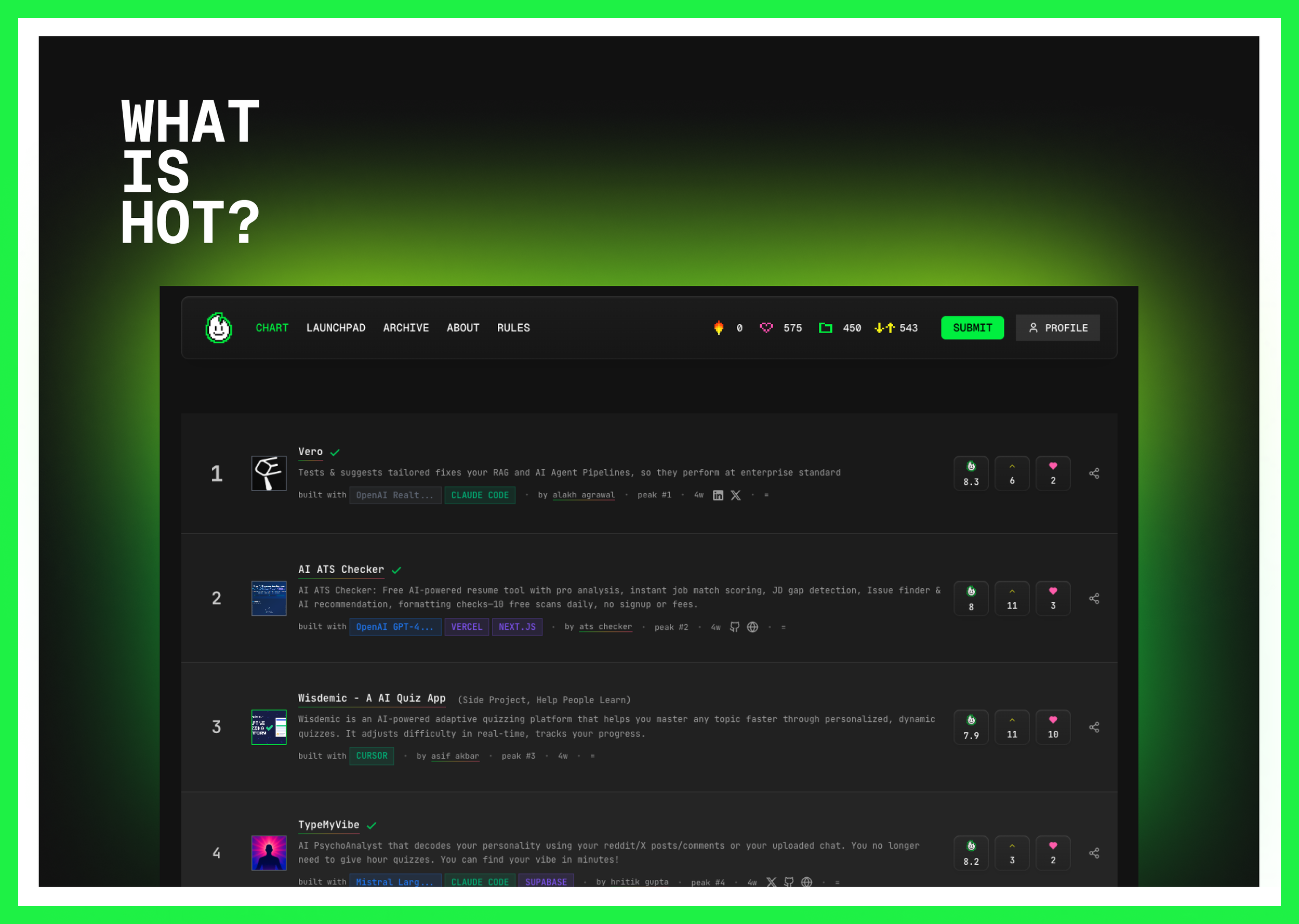

Hot100.ai — A Living Index of What AI Builders Are Actually Shipping

Hot100.ai started as a question more than a product.

I was spending a lot of time exploring AI tools and side projects, and I kept running into the same problem: discovery felt broken. Most directories were scraped, outdated, or optimized for SEO. Launch platforms rewarded who marketed hardest on day one, not who kept improving something useful over time.

I wanted a way to see what builders were actually shipping — week by week — and what was quietly gaining momentum.

So I built Hot100.ai.

The original experiment

The first version was intentionally simple: a weekly Top 100 chart for AI projects, inspired by music charts rather than startup leaderboards. Projects were submitted directly by builders, reviewed manually, and ranked once a week. No growth hacks, no pay-to-play, no scraping.

What surprised me wasn’t just the number of submissions; it was how fragile rankings were. Small changes in mechanics had outsized effects: early projects stuck around forever, late submissions struggled to surface, and voting alone didn’t tell the full story.
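To make that fragility concrete, here is a minimal sketch of how one small mechanic can flip a chart. It is not Hot100's actual ranking logic. The `Project` fields, the sample numbers, and the `gravity` parameter are all assumptions for illustration, and the decay formula is a generic age-based one.

```python
from dataclasses import dataclass


@dataclass
class Project:
    name: str
    votes: int        # cumulative community votes (hypothetical numbers)
    age_days: float   # days since submission


def raw_score(p: Project) -> float:
    # Ranking by cumulative votes alone: older projects keep their lead.
    return float(p.votes)


def decayed_score(p: Project, gravity: float = 1.5) -> float:
    # Generic time decay (an assumption, not Hot100's formula):
    # the older a project is, the more its votes are discounted.
    return p.votes / (p.age_days + 2) ** gravity


def rank(projects, score):
    # Highest score first; returns project names only.
    return [p.name for p in sorted(projects, key=score, reverse=True)]


projects = [
    Project("early-bird", votes=120, age_days=60),
    Project("steady", votes=80, age_days=21),
    Project("newcomer", votes=30, age_days=3),
]

print(rank(projects, raw_score))      # oldest project leads on raw votes
print(rank(projects, decayed_score))  # newest project leads once age is discounted
```

With these made-up numbers the two orderings are exact opposites, which is the point: the choice of a single parameter decides whether a chart favors incumbents or newcomers.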

That’s when Hot100 stopped being “a chart” and started becoming a system.

Turning a chart into infrastructure

Over time, Hot100 evolved into a broader discovery and indexing layer:

A growing database of 1,000+ AI projects with structured metadata

A consistent weekly refresh cadence to create trust and rhythm

An AI-assisted evaluation layer (“Flambo”) to assess design quality, innovation, and usefulness alongside community votes

Historical snapshots, so momentum can be tracked over time rather than measured once

A discovery layer that let projects live beyond their launch week

A big focus became balance: automation vs judgement, speed vs quality, signal vs noise. Some things are better handled by AI. Others still need a human pass. Designing that boundary became one of the most interesting parts of the project.
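As a rough illustration of that boundary, the sketch below blends a community vote share with an AI-assigned evaluation and derives momentum from weekly rank snapshots. Everything in it is assumed for the example: the 0 to 10 scale for the three criteria, the `ai_weight` value, and the function names. It is not a description of how Flambo or the Hot100 ranking actually work.

```python
def blended_score(votes: int, max_votes: int,
                  ai_scores: dict[str, float],
                  ai_weight: float = 0.4) -> float:
    """Combine a normalized vote share with an average AI evaluation.

    Assumes each AI criterion is scored on a 0-10 scale (hypothetical).
    """
    vote_part = votes / max_votes if max_votes else 0.0
    if ai_scores:
        ai_part = sum(ai_scores.values()) / (10 * len(ai_scores))
    else:
        ai_part = 0.0
    return (1 - ai_weight) * vote_part + ai_weight * ai_part


def momentum(rank_history: list[int]) -> int:
    """Places gained since the previous weekly snapshot.

    Positive means the project is climbing (rank 1 is the top).
    """
    if len(rank_history) < 2:
        return 0
    return rank_history[-2] - rank_history[-1]


score = blended_score(
    votes=50,
    max_votes=100,
    ai_scores={"design": 8, "innovation": 6, "usefulness": 7},
)
print(round(score, 2))
print(momentum([40, 25, 12]))
```

The weight is the design decision that matters here. Pushing `ai_weight` toward 1 makes the chart faster and more consistent but less accountable to the community, while pushing it toward 0 hands the chart back to whoever mobilizes the most votes.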

What’s been achieved so far

Hot100.ai now tracks over a thousand projects across categories like dev tools, writing, agents, health, and productivity. The weekly Top 100 has run consistently for months. A public API is live, allowing others to build dashboards, bots, and research tools on top of the data.

Along the way, Hot100 has also surfaced real product challenges: SEO and indexing at scale, category accuracy, trust signals, and how discovery changes when LLMs — not humans — are increasingly the audience.

It’s been a hands-on way to explore how modern products need to think about Answer Engine Optimization, not just traditional search engine optimization.

Why I keep working on it

Hot100.ai isn’t about predicting winners or creating hype. It’s about building a more honest snapshot of the AI builder ecosystem — one that reflects real work, real iteration, and real momentum.

For me, it’s been a deeply practical project. I’ve designed the UX, built the workflows, shaped the data model, shipped the API, and continuously refined the system based on what breaks in the real world.

Check it out at https://www.hot100.ai/

We launched on Product Hunt